In our last post, we left you with some thoughts about getting better MaxDiff results by using one of its variants. Today, we’ll discuss anchored MaxDiff – one of the improvements to the MaxDiff technique.

A single data point usually means little unless it is accompanied by a benchmark. If I tell you that there is 10% growth in the hospitality industry, you may read it as a year-on-year increase or as an overall industry trend. But when I tell you that the hospitality industry grew 10% this year, compared to 2% last year, you get a much clearer picture.

It was with this objective of strengthening MaxDiff results that anchoring was introduced.

Why Anchors Are Important to MaxDiff

MaxDiff is a technique used to capture relative preferences for multiple items. Respondents choose the ‘best’ and ‘worst’ of the options shown to them. They do not have the option of saying they prefer ‘none’ of the options or ‘all’ of the options.

When we present MaxDiff results, we know the relationship between all the attributes, but we haven’t measured them against an objective standard.

It may be that feature A is twice as important as feature B, which we would find out from MaxDiff. It may also be that neither A nor B is very important at all. We would not find this out from traditional MaxDiff.
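To see why traditional MaxDiff cannot tell these two situations apart, here is a minimal sketch. It assumes the scores come from a logit-style choice model, which is a common way to analyze MaxDiff data but is not something this post specifies. The utilities and the `choice_shares` helper are hypothetical and exist only for illustration. Shifting every utility down by the same amount leaves the relative shares unchanged, so the relative results alone cannot show whether the whole list is important or unimportant.

```python
import math

def choice_shares(utilities):
    """Convert utilities to relative shares with a softmax (logit) rule."""
    top = max(utilities.values())  # subtract the max for numerical stability
    exps = {item: math.exp(u - top) for item, u in utilities.items()}
    total = sum(exps.values())
    return {item: e / total for item, e in exps.items()}

# Hypothetical utilities: a world where the features matter a lot...
high = {"A": 2.0, "B": 1.0, "C": 0.0}
# ...and a world where every feature is 5 points less appealing.
low = {item: u - 5.0 for item, u in high.items()}

shares_high = choice_shares(high)
shares_low = choice_shares(low)

for item in high:
    print(item, round(shares_high[item], 3), round(shares_low[item], 3))
```

Both columns print the same shares for each feature, which is the gap that an anchor is meant to close.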

Anchoring can prove very useful in nearly any multiple-option setting. You can use anchored MaxDiff to understand which ice cream or candy flavors to offer consumers. It can help identify which of a list of bank services customers would use, or which features of a loyalty program are most appealing. The method applies to a wide range of business questions.

How to Add an Anchor to MaxDiff

When you anchor MaxDiff, you give it the objective standard it traditionally lacks. There are two ways to do this. Both involve asking an additional question about the features being evaluated.

- Dual Response: This method requires an additional response for each task. As in a typical MaxDiff exercise, respondents first choose a ‘best’ and a ‘worst’ option from the items shown. They are then asked whether all of those items are important to them, none of them are, or only some of them are.

- Direct Binary: This method requires a single extra question that follows a traditional MaxDiff exercise. This question shows all the items at once and asks respondents to select only the items that meet their criteria.
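The two question types can be turned into comparisons against the anchor in a similar way. The sketch below shows one commonly described coding convention; the post does not say which coding its authors used, so treat the rules, the `ANCHOR` label, and both function names as assumptions. Each answer becomes a list of (winner, loser) pairs in which the anchor acts as one more item.

```python
ANCHOR = "ANCHOR"  # hypothetical label for the threshold pseudo-item

def dual_response_pairs(shown, best, worst, followup):
    """Pairs implied by the follow-up question on one MaxDiff screen.

    followup is "all", "none", or "some" (important items on this screen).
    """
    if followup == "all":
        # Every item shown beats the anchor.
        return [(item, ANCHOR) for item in shown]
    if followup == "none":
        # The anchor beats every item shown.
        return [(ANCHOR, item) for item in shown]
    if followup == "some":
        # Assumed rule: the best item clears the anchor, the worst does not.
        return [(best, ANCHOR), (ANCHOR, worst)]
    raise ValueError(f"unknown follow-up answer: {followup!r}")

def direct_binary_pairs(all_items, selected):
    """Pairs implied by the single select-all-that-apply question."""
    chosen = set(selected)
    return [
        (item, ANCHOR) if item in chosen else (ANCHOR, item)
        for item in all_items
    ]

# Hypothetical loyalty program benefits, for illustration only.
screen = ["Free Wi-Fi", "Room upgrade", "Late checkout"]
print(dual_response_pairs(screen, "Free Wi-Fi", "Late checkout", "some"))
print(direct_binary_pairs(screen, ["Free Wi-Fi"]))
```

These pairs would then be added to the regular best/worst data before estimating utilities, so the anchor ends up on the same scale as the items.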

|

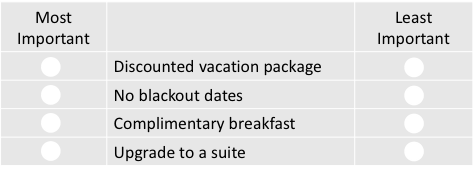

DUAL RESPONSE (On every MaxDiff screen) Please select which loyalty program benefit is most important and which is least important to you: Considering just the above benefits, please state if:

|

DIRECT BINARY (After MaxDiff) Which, if any, of these items are must-haves for you?

|

Either of these anchoring methods can be used with MaxDiff. It is crucial to make the wording of the anchoring question(s) as objective as possible; these statements will define where the anchor falls in the final result. If the statement is too generic, individual bias comes into play and the results can become difficult to interpret.

Using Anchors in Research Findings

The anchor is the ‘threshold’ or ‘null’ point: the level at which respondents attach zero importance to a feature, and the minimum a feature must exceed to meet the standard set by the anchoring question. Just like any other feature, the anchor gets a utility at the end of the exercise. A feature counts as important only if its utility is higher than the anchor’s utility.
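As a rough illustration of that comparison, the sketch below subtracts the anchor's utility from each feature's utility, so the anchor sits at zero and the sign shows which side of the threshold a feature falls on. The utilities, the feature names, and the `classify_against_anchor` helper are all hypothetical; they are not results from the study described here.

```python
def classify_against_anchor(utilities, anchor_key="ANCHOR"):
    """Rescale utilities so the anchor is zero and flag features above it."""
    anchor = utilities[anchor_key]
    return {
        item: {"relative_utility": u - anchor, "important": u > anchor}
        for item, u in utilities.items()
        if item != anchor_key
    }

# Hypothetical estimated utilities, including one for the anchor itself.
example = {
    "Free Wi-Fi": 1.5,
    "Room upgrade": 0.5,
    "Late checkout": -0.25,
    "ANCHOR": 0.25,
}

for item, result in classify_against_anchor(example).items():
    label = "important" if result["important"] else "below threshold"
    print(f"{item}: {result['relative_utility']:+.2f} ({label})")
```

Reporting utilities relative to the anchor is a presentation choice: the ranking of features is unchanged, but each number can now be read against the benchmark.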

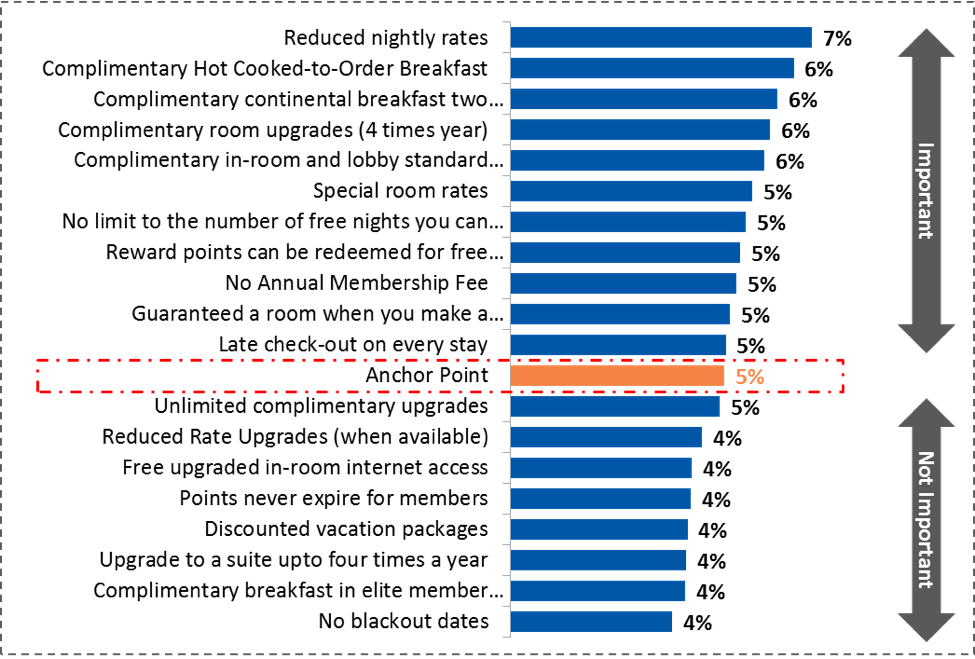

The output of this exercise might look something like this:

The anchor point is the threshold level, which can be set as the benchmark. Anything above it is deemed important, while anything below is less important. It is always easier to explain the relationships between features when numbers are comparable to a benchmark.

Adding an anchor is a small change, but it addresses a real limitation of traditional MaxDiff and gives marketers stronger, more objective findings.

Looking at the above example, the services that are important for any hotel loyalty program can be easily identified. But we couldn’t stop there, because this isn’t just some imaginary example. It’s based on a real situation, where our client wanted us to come up with three importance buckets from a list of 80. Watch how we tackled this problem using Anchored MaxDiff at the SKIM Conference on April 15, 2016. We will be presenting our research findings then, and sharing them with you in our blog after the conference!