Folk Wisdom’s Fallacy | Getting more from less: Few-shot learning

Introduction

Imagine you are asked to build facial recognition software for an office of around 100 employees. The natural first step, before building a model, is to collect many images of these employees so as to build a large dataset. However, what if, hypothetically speaking, only a handful of images are available per employee and you are left with a very small dataset? Training a convolutional neural network from scratch on so few data points is unlikely to work well. So, should you rule out building a model from so little data?

Not necessarily. In such cases, one can resort to “few-shot meta learning”, an approach that lets a model learn from less!

Meta learning refers to training a model on a variety of related tasks, each with a limited number of data points, so that when it faces a new, similar task it can reuse what it learned from the previous tasks and doesn’t need training from scratch [1]. Human cognition works in a similar manner: we learn from different experiences and connect those learnings to a new concept.

The main aim of few-shot learning is to classify new examples after having seen only a few examples of each class in the training data. In general, “N-way k-shot” learning refers to differentiating between N classes given k labeled examples of each class.

What forms the base?

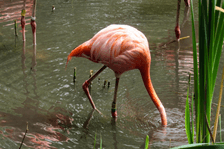

Consider a 3-way 2-shot image classification problem, where the objective is to differentiate between 3 classes of birds given only 2 images of each class. Meta learning breaks the whole process down into ‘episodes’. Each episode consists of learning from a small dataset, known as the ‘support set’, and then predicting on another dataset, known as the ‘query set’ [2]. In this case, each support set contains 3 classes of birds with 2 images of each class, and each query set contains 3 different images drawn from those same 3 classes. Different episodes use different classes of birds in their support sets, so the model learns how to discriminate between bird classes in general and, in doing so, learns from the related tasks. The test task is then to identify a completely new set of 3 bird classes from just 2 images of each.

Support Set for a 3-way 2-shot Meta learning

Query Set for a 3-way 2-shot meta learning
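
The episode structure described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `sample_episode`, the dictionary layout of the dataset, the toy `birds` data, and the choice of one query image per class are all hypothetical and not taken from any particular library.

```python
import random

def sample_episode(dataset, n_way, k_shot, n_query=1, rng=None):
    """Sample one N-way k-shot episode.

    dataset: dict mapping class label -> list of examples (assumed layout).
    Returns (support, query), each a list of (example, label) pairs.
    """
    rng = rng or random.Random()
    # Pick the N classes that make up this episode.
    classes = rng.sample(sorted(dataset), n_way)
    support, query = [], []
    for label in classes:
        # Draw k support and n_query query examples without overlap.
        picked = rng.sample(dataset[label], k_shot + n_query)
        support += [(x, label) for x in picked[:k_shot]]
        query += [(x, label) for x in picked[k_shot:]]
    return support, query

# Toy stand-in for an image dataset: 5 bird classes, 4 "images" each.
birds = {
    f"species_{c}": [f"species_{c}_img{i}" for i in range(4)]
    for c in "ABCDE"
}

support, query = sample_episode(
    birds, n_way=3, k_shot=2, n_query=1, rng=random.Random(0)
)
print(len(support), len(query))  # 6 support images, 3 query images
```

Calling `sample_episode` repeatedly yields episodes with different class subsets, which is what lets the model practise the task of telling classes apart from very few examples.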

What’s beneath the surface?

There are many approaches to few-shot meta learning. One of them is to learn embeddings of the images during training that help in discriminating between the different classes. It is important to note that in all these approaches the model is still trained on many examples; those examples only need to come from a domain related to the target task, for which we have only a few data points.

In pairwise comparators such as ‘Siamese networks’, two input images are taken at a time and fed to two identical neural networks (sharing the same weights) that generate an embedding for each image. The two embeddings are then passed to an energy function that gives a similarity measure between the two images. During training, randomly sampled pairs of images are passed to the network so that it learns to distinguish images of the same class from images of different classes.
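
A minimal NumPy sketch of the forward pass of such a pairwise comparator is shown below. It is an illustration only: the layer sizes, the untrained random weights, the use of Euclidean distance as the energy function, and the contrastive loss are all assumptions chosen for the example (they are common choices, but the article does not prescribe them).

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical toy sizes: 64-dim flattened "images", 16-dim embeddings.
IN_DIM, HID_DIM, EMB_DIM = 64, 32, 16
W1 = rng.normal(scale=0.1, size=(IN_DIM, HID_DIM))
W2 = rng.normal(scale=0.1, size=(HID_DIM, EMB_DIM))

def embed(x):
    """Shared embedding network: both branches use the same weights."""
    h = np.maximum(x @ W1, 0.0)  # ReLU hidden layer
    return h @ W2

def energy(x_a, x_b):
    """Euclidean distance between embeddings; lower means more similar."""
    return np.linalg.norm(embed(x_a) - embed(x_b), axis=-1)

def contrastive_loss(x_a, x_b, same, margin=1.0):
    """Pull same-class pairs together and push different-class pairs
    at least `margin` apart (an assumed training objective)."""
    d = energy(x_a, x_b)
    per_pair = same * d**2 + (1 - same) * np.maximum(margin - d, 0.0) ** 2
    return float(np.mean(per_pair))

# A batch of 4 random pairs; same[i] is 1 if pair i shares a class.
x_a = rng.normal(size=(4, IN_DIM))
x_b = rng.normal(size=(4, IN_DIM))
same = np.array([1, 0, 1, 0])

print(energy(x_a, x_b))                  # one distance per pair
print(contrastive_loss(x_a, x_b, same))  # scalar loss for the batch
```

Because both inputs go through the same `embed` function, the "two identical networks" are really one set of weights applied twice, which keeps the similarity measure symmetric in its two inputs.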

Other comparators that are often used for learning from embeddings include triplet networks, matching networks, prototypical networks and relation networks.

References

- [1] Ravichandiran, S. (2018). Hands-On Meta Learning with Python. Packt Publishing.

- [2] https://www.borealisai.com/en/blog/tutorial-2-few-shot-learning-and-meta-learning-i/

- [3] https://pixnio.com/

Technical articles are published from the Absolutdata Labs group, and hail from The Absolutdata Data Science Center of Excellence. These articles also appear in BrainWave, Absolutdata’s quarterly data science digest.